Microsoft Virtual Academy: Something Every Microsoft Developer Should Take A Look At!

There are many roads and avenues a tech-head can take to either get a grasp on new technology or prepare for certification. Unfortunately, some ways of gaining knowledge on a subject can come at a great cost, especially when it comes to anything Microsoft.

Microsoft has always had some great forum and blogging communities to help developers get the expertise they require, but I've always found them somewhat divided and rough around the edges. Now Microsoft has reworked its community and provided learners with a wide variety of courses freely available to anyone!

MVA courses are not specifically meant for exam preparation, so they should be used in addition to paid courses, books and online test exams when preparing for a certification. But they definitely help. It takes more than just learning theory to pass an exam.

So if you require some extra exam training or just want to brush up your skills, give a few topics a go. I myself decided to test my skills by starting right from the beginning and covering courses that relate to my industry. In this case, to name a few:

- Database Fundamentals

- Building Web Apps with ASP.NET Jump Start

- Developing ASP.NET MVC 4 Web Applications Jump Start

- Programming In C# Jump Start

- Twenty C# Questions Explained

I can guarantee you'll be stumped by some of the exam questions after covering each topic. Some questions can be quite challenging!

I've been a .NET developer for around 7 years and even I had to go through the learning content more than once. However long we've been in the technical industry, we are all susceptible to forgetting things, and we may not be aware of different coding techniques.

One of the great motivations for using MVA is the ranking system, which places you on a leaderboard against other avid learners and lets you see yourself progress as you complete each exam. My only advice is not to let the ranking system become your sole motivation, just to "show off" your knowledge. The important part is learning. What's the point in making a random attempt at each exam without a deep understanding of why you got an answer correct or incorrect?

You can see how far I have progressed by viewing my MVA profile here: http://www.microsoftvirtualacademy.com/Profile.aspx?alias=2181504

All in all: Fantastic resource and fair play to Microsoft for offering some free training!

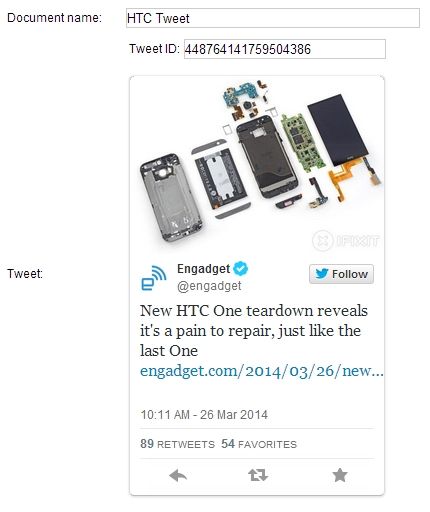

The plan originally was to ditch my current laptop, an Alienware M11x R3, for something a little more recent with better build quality. Even though my Alienware is an amazing workhorse for the type of work I do (with a very high spec), I started to get annoyed with the common issue these laptops have: the screen touching the keyboard when closed.
